Newspapers quickly reported the story: the Dutch Prime Minister and other European representatives from the UK, Estonia, Latvia, and Lithuania allegedly talked on Zoom with a deepfake of Leonid Volkov. Volkov, the chief of staff of Alexei Navalny (Vladimir Putin’s main opponent, now in prison), denied having had this conversation, while other contacts claimed to have spoken with an impersonator. So, has Leonid Volkov been the victim of a deepfake?

❋

Past the headlines, the informed reader will quickly notice the caution of the Dutch journalists who have been covering this case for several days. As a reminder, the story takes place between March and the end of April of this year, when various representatives of European parliaments (from the UK, Estonia, Latvia, and Lithuania) claimed to have had a conversation with an individual pretending to be Leonid Volkov. The manipulation was allegedly carried out using a Zoom filter, similar to the Elon Musk filter (note 1: “DeepFake Elon Musk bombs a Zoom call”) that attracted so much attention when it was released.

The problem here is that people claim to have seen a #deepfake. No hard evidence is provided and we cannot evaluate the threat by hearsay. Claims should be backed by evidence. Other alleged of deepfakes seem to suffer from a lack of convincing proof. https://t.co/qEh7gPmcwo

—Gerald Holubowicz (@gholubowicz) April 25, 2021

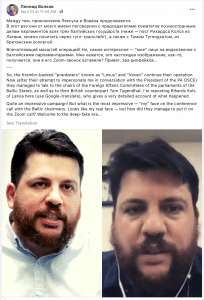

For a few days, contradictory statements and false evidence spread on social networks. Volkov himself spoke of a deception set up with the help of a deepfake (note 2: the Facebook publication where Leonid Volkov himself suggests that his double could be part of the era of deepfakes, https://t.co/RlInLtDAhm?amp=1). But the joke didn’t last long, and the revelations published in The Verge (note 3: “‘Deepfake’ that supposedly fooled European politicians was just a look-alike, say pranksters”, The Verge, 30 April 2021) quickly ended the deception. Vladimir Kuznetsov and Alexei Stolyarov (the man who looks like Volkov) confessed that they had carried out the deception with simple make-up and a well-chosen camera angle to fool the other participants in the Zoom conference. The technique resembles that of the Yes Men (The Yes Men, Framer Framed, in “Dow Does the Right Thing”, 2004) and relies on a simple resemblance to the target of the manipulation.

The “deepfake” excuse

One case made a big splash in 2019, when the Wall Street Journal reported that a company insured by Euler Hermes had been defrauded of $243,000 by someone using an audio deepfake (note 4: “Thieves Used Audio Deepfake of a CEO to Steal $243,000”, Vice, 2019). The name of the company was not disclosed at the time, and this revelation reignited the security debate around the fraudulent use of deepfakes.

The Liar’s Dividend is a phenomenon where someone can get away with lying by saying that something is a “deepfake”.

However, considering the state of audio deepfake technology at the time, there was reason to question the veracity of the defrauded company’s statements. As part of my research, I interviewed Nicolas Obin, lecturer at the Faculty of Sciences of Sorbonne University and researcher in the Sound Analysis and Synthesis team at Ircam, and we talked about the case. He told me that he had doubts about the use of an audio deepfake and was more inclined to think that it was a classic case of telephone impersonation.

Other allegations of the use of deepfakes have been proven false. From President Ali Bongo in Gabon (note 5: « Les “deepfakes”, arme de désinformation massive », Jeune Afrique, 11 April 2019) to Uighur musician Abdurehim Heyit (note 6: Abdurehim Heyit: Chinese video “disproves Uighur musician’s death”, BBC, 11 February 2019) and Algerian President Abdelmadjid Tebboune (note 7: Le « vrai » Président Tebboune aurait fait « une apparition » surprenante !, le 7 TV, 13 December 2020), allegations about the use of deepfakes for political purposes are intensifying.

Deepfakes become the perfect alibi for manipulation, whether to minimize the responsibility of the gullible, to underline the perfidy of opponents, to support the craziest conspiracy theories, or, more prosaically, to support the legitimacy of a dubious political action. Deepfakes have not finished playing a role in political communication. Their main objectives: blurring the lines of reality, disrupting the reading of events, instilling doubt on both sides of the argument, or supporting a strategy to polarize public debate for self-interested purposes (note 8: read the study by Schiff, Kaylyn J., Daniel Schiff, and Natalia Bueno. 2021. “The Liar’s Dividend: The Impact of Deepfakes and Fake News on Trust in Political Discourse.” OSF. April 29. doi:10.17605/OSF.IO/QPXR8).

The echo chamber created by online media amplifies the allegations and, with one news story chasing the next, crystallizes in the public mind an anxiety-provoking narrative around synthetic media and their deleterious effect on democracy. As a result, a very imprecise and polarized image of deepfakes and their use permeates the public sphere, right up to the political leaders who, in reaction, imagine ill-defined or useless legislative solutions (note 9: Olivia Grégoire a-t-elle raison de craindre que les deepfakes influencent les présidentielles en 2022 ?, 16 April 2021).

The technocentric approach to deepfake countermeasures, supported by a kind of technological solutionism pushed by big players such as Facebook or Microsoft, doesn’t help to solve this huge challenge, which exploits the “liar’s dividend”. It seems illusory to look to the deepfake detectors marketed by newly created startups for a comprehensive answer to the problems raised by synthetic media.

Developing media literacy, particularly around the impact of these new types of content, seems more fruitful (note 10: Hwang Y, Ryu JY, Jeong SH. Effects of Disinformation Using Deepfake: The Protective Effect of Media Literacy Education. Cyberpsychol Behav Soc Netw. 2021 Mar; 24(3):188–193. doi: 10.1089/cyber.2020.0174. Epub 2021 Mar 1. PMID: 33646021), as it gives citizens the intellectual tools of analysis and contextualization they need to correctly understand the digital environment in which they operate.

The resulting empowerment frees individuals from a technical filter mainly controlled by private entities and favors the emergence of a collective awareness of the issues related to synthetic media and the use of artificial intelligence techniques.

Notes: